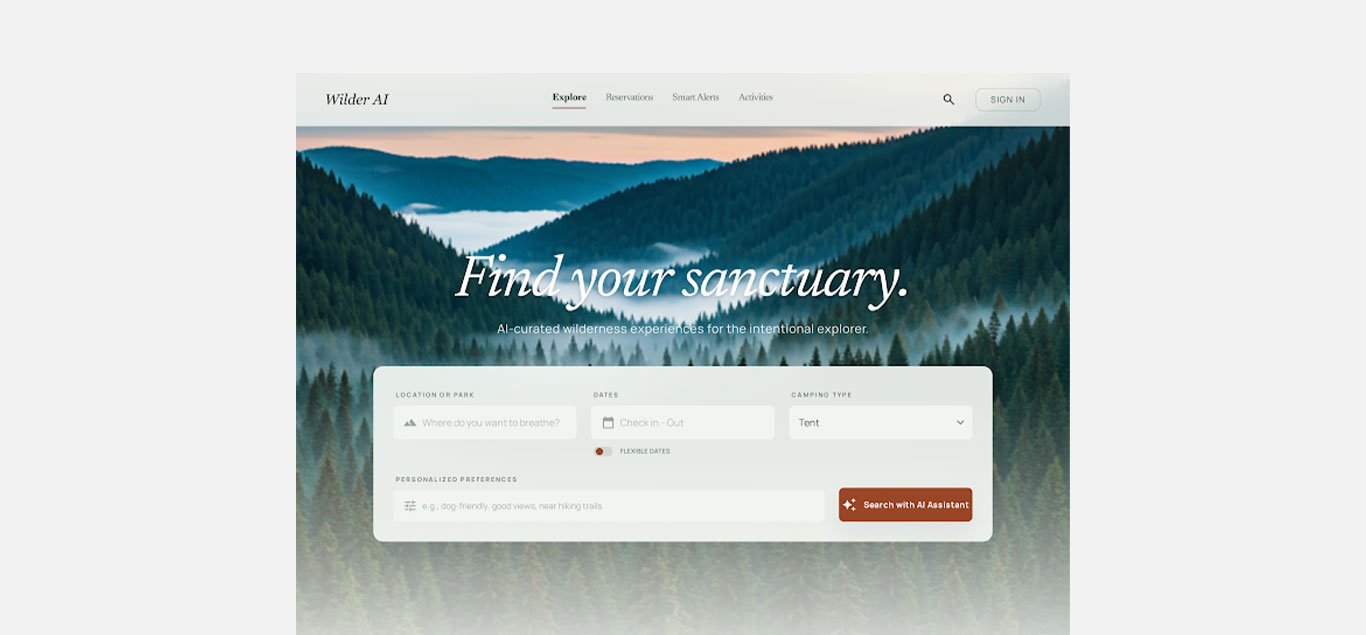

Apply AI-native UX patterns to a real, high-friction product.

California's campsite reservation system forces users through a linear, one-at-a-time browsing experience in one of the most competitive booking environments imaginable. Users cannot compare options side by side, must click through sites and dates sequentially, and often find that by the time they have enough information to decide, the site is already gone.

When a site is found, the details needed to make a confident decision are frequently missing or inaccurate. Views, trail proximity, trailer clearance, noise sources: none of it is reliably surfaced. Users book blind and find out the reality on arrival.

This capstone project for Stanford's UX/UI Design for AI Products certification explored how AI could directly fix these problems through smarter campsite matching, enriched site information, and intelligent availability alerts.

A booking system that punishes its users.

Through semi-structured interviews with 4 participants across distinct camping profiles (family weekenders, retired trailer campers, solo explorers, flexible backpackers) and competitive analysis of ReserveCalifornia, Recreation.gov, Hipcamp, and The Dyrt, I identified a set of compounding pain points:

No quality signals: No ratings, reviews, or reliable descriptions. All 4 participants regularly used external sites like The Dyrt, Reddit, and YouTube to get basic planning information the system should provide.

Blind competition: High-demand campgrounds disappear within minutes of release. Users have no way to know how competitive a given park, date, or site is. The 8am release window feels unwinnable.

No personalization: Users need to already know what they are looking for before starting to book. There is no discovery support, no matching, and no way to express preferences in natural terms.

Constant manual monitoring: Without reliable alerts, users check the site repeatedly throughout the day. One participant described it as a full-time job. Trip abandonment is common when sites are unavailable.

Different lives, same 8am scramble.

I used a mixed-methods approach designed to capture not just task completion but the planning anxiety and workarounds users invent when the system fails them:

User interviews (4 participants): 1:1 semi-structured conversations covering planning rituals, past trips, and frustration points. Participants ranged from casual family campers to experienced trailer campers taking 8-10 trips a year.

Sean

Weekends, Family: "Tell me the moment something frees up for my specific weekend."

John & Debbie

Retiree, Flexible: "Give me more details. I once stayed next to a weigh station. I never would have booked that if I had known."

Trevor

Weekends, Solo Explorer: "Show me what's quiet, shaded, and near a trail."

Esther

Flexible, Friends: "Provide a notification so I know when something opens up because I am flexible."

Competitive analysis (4 products): Evaluated ReserveCalifornia, Recreation.gov, Hipcamp, and The Dyrt. Found that no true AI-powered campsite search and booking experience exists. Booking systems provide access but no intelligence; discovery platforms inspire but do not solve booking competition.

AI capability probing: Prompt testing with Claude and GPT to scope what LLMs could actually deliver for structured campsite details, booking insights, and UI pattern generation. Key finding: AI excels at structured output and UX thinking, but real functionality requires API integration.

Four areas where AI directly addresses documented pain points.

Predictive Availability

Surface AI-generated demand forecasts: how competitive is this park on this weekend, when do cancellations typically occur, what are the odds of a site opening in the next 72 hours?

Conversational Trip Matching

Replace filter-and-grid discovery with a conversational intake that asks users what they want in natural terms, showing best-fit campgrounds ranked by match quality.
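
As a rough illustration of "ranked by match quality", the sketch below scores campgrounds by how many of the user's stated preferences they satisfy. It is an assumption-laden example, not code from the project: the `Campground` type, the trait names, and the sample campgrounds are all hypothetical, and a real system would extract preferences from the conversation rather than receive them as a ready-made set.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Campground:
    name: str
    traits: frozenset  # hypothetical trait tags, e.g. {"quiet", "shaded"}


def rank_campgrounds(wanted, campgrounds):
    """Pair each campground with the wanted traits it offers, best match first.

    Python's sort is stable, so campgrounds with equal match counts
    keep their original order.
    """
    scored = [(c, wanted & c.traits) for c in campgrounds]
    return sorted(scored, key=lambda pair: len(pair[1]), reverse=True)


def explain(wanted, campground, matched):
    """Describe a match in concrete counts instead of a percentage."""
    detail = ", ".join(sorted(matched)) if matched else "none"
    return (
        f"{campground.name}: matches {len(matched)} of "
        f"{len(wanted)} preferences ({detail})"
    )


# Hypothetical data for illustration only.
wanted = {"quiet", "shaded", "near_trail"}
campgrounds = [
    Campground("Campground A", frozenset({"shaded", "near_trail"})),
    Campground("Campground B", frozenset({"quiet", "shaded", "near_trail"})),
    Campground("Campground C", frozenset({"ocean_view"})),
]

ranked = rank_campgrounds(wanted, campgrounds)
for campground, matched in ranked:
    print(explain(wanted, campground, matched))
```

The explanation string reports counts and named traits so the user can see why a result ranks where it does.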

Smart Cancellation Alerts

An AI layer that learns preferences and proactively surfaces relevant cancellations: not just "something opened up" but "a shaded site opened at San Elijo for the exact weekend you've been watching."
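
A minimal sketch of how a cancellation might be checked against what a user is watching, assuming each watch stores a park, a date window, and required traits. The `Watch` and `Opening` types, the field names, the site ID, and the dates are hypothetical. The learning of preferences described above is out of scope here; this covers only the final matching step.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Watch:
    park: str
    start: date                 # first night the user would accept
    end: date                   # last night the user would accept
    required_traits: frozenset  # traits the site must have


@dataclass(frozen=True)
class Opening:
    park: str
    site_id: str
    night: date
    traits: frozenset


def matches(watch, opening):
    """True when the opening is at the watched park, inside the watched
    dates, and has every required trait."""
    return (
        opening.park == watch.park
        and watch.start <= opening.night <= watch.end
        and watch.required_traits <= opening.traits
    )


def alert_text(watch, opening):
    """Name the specific match rather than a generic 'something opened up'."""
    traits = ", ".join(sorted(watch.required_traits)) or "any"
    return (
        f"A site ({traits}) opened at {opening.park} for "
        f"{opening.night.isoformat()}, inside the dates you are watching."
    )


# Hypothetical watch and openings for illustration only.
watch = Watch("San Elijo", date(2025, 7, 11), date(2025, 7, 13),
              frozenset({"shaded"}))
shaded = Opening("San Elijo", "site-042", date(2025, 7, 12),
                 frozenset({"shaded", "ocean_view"}))
unshaded = Opening("San Elijo", "site-017", date(2025, 7, 12),
                   frozenset({"ocean_view"}))

for opening in (shaded, unshaded):
    if matches(watch, opening):
        print(alert_text(watch, opening))
```

Only the shaded opening produces an alert, so the user is not notified about sites that fail a stated requirement.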

AI-Assisted Trip Context

Enrich each listing with AI-curated information: driving distance, user-contributed site notes, verified dimensions, and site-specific photo tags.

Two rounds of testing, low-fi through high-fi.

Low-fidelity testing (4 participants): Hand-authored AI responses to validate the conversational match flow before build. Five task scenarios covering home screen orientation, AI assistant interaction, search result evaluation, site detail decision-making, and smart alert management.

High-fidelity testing (4 participants): Prototype walkthroughs against real planning tasks. Tested explore and discovery, AI-matched results evaluation, site detail with availability forecasting, and smart alert dashboard interaction.

Summary insights were generated using Claude AI based on user responses, then manually reviewed and finalized. Testing revealed clear structural and interaction feedback at the lo-fi level, while hi-fi testing surfaced emotional and content-level feedback around trust, photos, and labeling.

Three variants exploring the balance between user control and system automation.

Direct Manipulation

Favored: The user remains fully in control. They input search criteria, the system surfaces notifications when criteria are met, and the user decides whether to complete the booking.

Strength: Maintains transparency and user control, builds confidence in decision-making.

Tradeoff: Still requires effort and fast action from the user.

Interface Agent

The system acts as an autonomous agent that books campsites on behalf of the user when a strong match becomes available.

Strength: Maximizes efficiency by automating the booking process.

Tradeoff: All participants expressed discomfort with this approach and felt it would be wrong. Full automation could undermine trust and perceived fairness.

Mixed Initiative

Favored: Both the system and the user take turns. The AI monitors and surfaces opportunities; the user makes the final decision.

Strength: Balances efficiency with control. Directly addresses the core pain points of effort, timing, and uncertainty.

Tradeoff: Timing and notification design are critical. Alerts could feel overwhelming if not designed well.

Key design decisions informed directly by user testing.

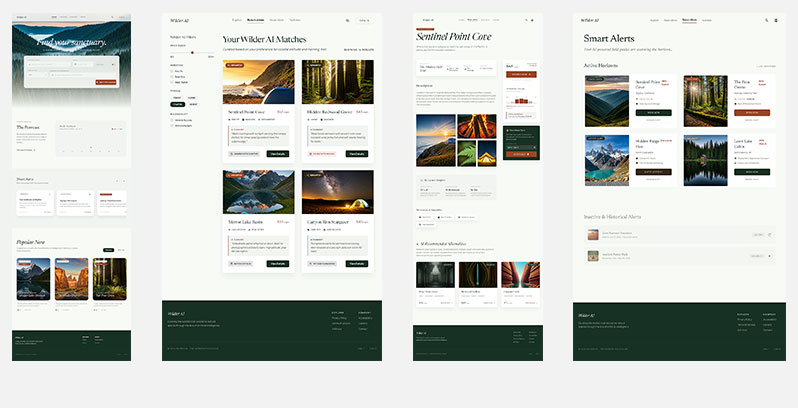

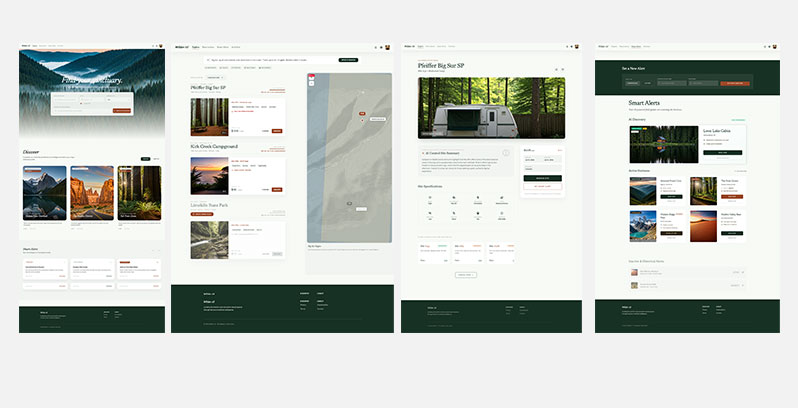

AI-powered search: Moved the Discover section higher in the experience to reinforce that the AI is continuously scanning and surfacing opportunities. Introduced a "Trending" vs. "Near You" toggle to distinguish between public signals and personalized recommendations, increasing transparency and trust.

Search results with explainability: Replaced traditional filters with a conversational input for dynamic refinement. Increased explainability by breaking results down at the campground level ("1 out of 89 available campsites") with a clear explanation that the AI is narrowing results based on user inputs. Added an inline map for spatial context.

AI-curated site detail: AI-labeled summary with an explicit "Sourced from..." line, never blended with verified specs. Site specifications (clearance, trail access, noise, views) surfaced as an icon grid. Other available sites within the same campground shown to build trust that the AI is not hiding alternatives.

Smart alerts: Three alert types unified but labeled (Cancellation, Lottery, Availability). Incorporated a human-in-the-loop approach where alerts propose a potential booking action but the system never completes a booking on the user's behalf. Historical alerts remain visible as a learning signal for the matching model.
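
The human-in-the-loop rule can be expressed as a small state model in which an alert starts as a proposal and only the user can turn it into a booking. This is a sketch under stated assumptions, not the prototype's code: the `Alert` class, the `actor` parameter, the state names, and the sample site labels are hypothetical. Only the three alert types come from the design.

```python
from dataclasses import dataclass
from enum import Enum


class AlertType(Enum):
    CANCELLATION = "Cancellation"
    LOTTERY = "Lottery"
    AVAILABILITY = "Availability"


class AlertState(Enum):
    PROPOSED = "proposed"    # system suggested a booking action
    CONFIRMED = "confirmed"  # user chose to book
    DISMISSED = "dismissed"  # user declined


@dataclass
class Alert:
    alert_type: AlertType
    site_label: str
    state: AlertState = AlertState.PROPOSED

    def confirm(self, actor):
        """Move to CONFIRMED, but only when the user initiates it."""
        if actor != "user":
            raise PermissionError("Only the user can confirm a booking.")
        if self.state is not AlertState.PROPOSED:
            raise ValueError("Alert has already been resolved.")
        self.state = AlertState.CONFIRMED

    def dismiss(self):
        """Decline the proposal; the alert itself is kept."""
        self.state = AlertState.DISMISSED


# Hypothetical alerts for illustration only.
history = [
    Alert(AlertType.CANCELLATION, "Shaded site, San Elijo"),
    Alert(AlertType.AVAILABILITY, "Trail-adjacent site, Campground B"),
]

try:
    history[0].confirm(actor="system")  # the system may not auto-book
    system_blocked = False
except PermissionError:
    system_blocked = True

history[0].confirm(actor="user")
history[1].dismiss()

# Resolved alerts stay in the list, so past decisions remain visible.
for alert in history:
    print(f"[{alert.alert_type.value}] {alert.site_label}: {alert.state.value}")
```

Keeping dismissed alerts in `history` rather than deleting them mirrors the decision to leave historical alerts visible.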

What testing validated.

Smart Alerts removed booking anxiety: participants said they would stop manually checking the site if they trusted the alert would fire. AI-labeled summaries increased rather than reduced trust. Photos were the primary decision driver across all participants, and users preferred concrete scarcity language ("2 sites left") over abstract match percentages.

Principles applied to every AI interaction in the product.

Explainability: Show why results are surfaced (e.g., "1 of 89 campsites"). Users can view more options to validate AI choices.

Transparency: AI-generated summaries clearly labeled and sourced from multiple inputs. Not presented as absolute truth.

Human-in-the-loop: AI suggests, user decides. No auto-booking, to ensure fairness and maintain control.

Predictability: Consistent behavior based on user inputs. Smart alerts reinforce expected system actions.

What this project taught me about designing with AI.

This was my first end-to-end AI product design project, and it reinforced that the hardest design challenge with AI is not what the technology can do, but what users will trust it to do. Every participant rejected full automation. The winning pattern was mixed initiative: AI handles the monitoring and matching, the user makes the call.

I also learned the importance of distinguishing between experience prototypes and technical prototypes when working with AI. Prompt-generated outputs feel plausible but can mask the gap between what is simulated and what would require real API integration to deliver. Being honest about that distinction in testing is essential.

The project deepened my ability to integrate AI tools into my own design workflow. I used Claude for insight synthesis, Granola for interview capture, Figma Make for lo-fi wireframes, and a range of rapid prototyping tools (Lovable, Cursor, v0, Replit, Stitch) across the build.